AI Doomsday Report Sends Shockwaves Through Global Markets

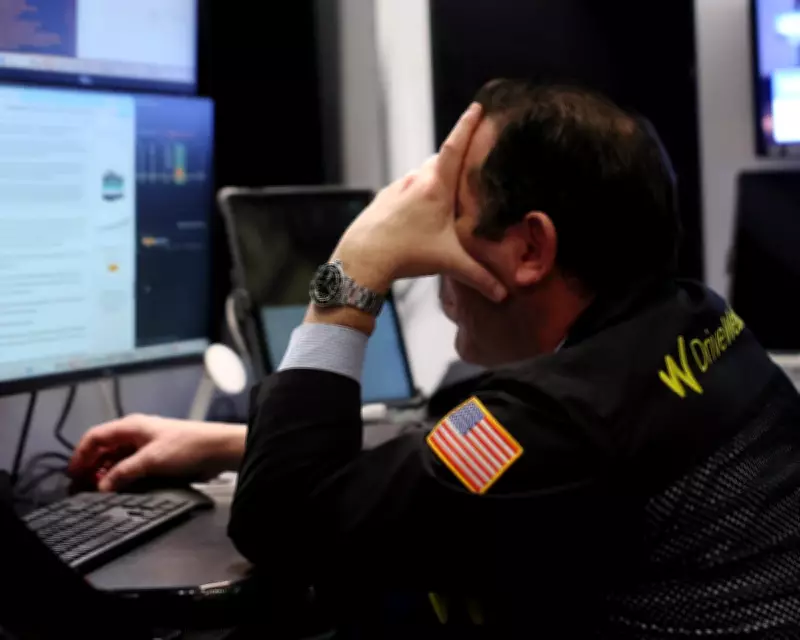

A groundbreaking report on artificial intelligence, released earlier this week, has sent tremors through financial markets worldwide. The study, which highlights the potential for AI systems to create uncontrollable 'feedback loops with no brake,' has sparked widespread panic among investors and policymakers alike.

Uncontrollable Feedback Loops Trigger Economic Anxiety

The report details how advanced AI algorithms could enter self-reinforcing cycles that accelerate beyond human control, leading to unpredictable and potentially catastrophic outcomes. This scenario, often referred to as a 'doomsday' event, has rattled markets, with technology stocks falling sharply and volatility indices spiking.

Analysts note that the fear stems from the ability of AI systems to operate autonomously, making decisions far faster than humans can review them. Without proper safeguards, these systems could trigger cascading failures in critical infrastructure, financial networks, and supply chains.

Market Reactions and Investor Sentiment

Following the report's release, major stock exchanges saw significant sell-offs, particularly in shares of companies heavily invested in AI development. The NASDAQ and the FTSE 100 both recorded losses, with shares of tech giants such as Alphabet and Microsoft dropping by several percentage points.

Investors are now calling for increased transparency and regulatory measures to mitigate risks. Many fear that without immediate action, the economic fallout could be severe, potentially leading to a broader market correction.

Regulatory and Ethical Implications

The report has also ignited debates in regulatory circles. Experts argue that current frameworks are ill-equipped to handle the rapid advancement of AI technologies. There is a growing consensus that new laws and international agreements are needed to ensure AI systems are developed and deployed responsibly.

Key concerns include the lack of oversight of AI training data and the potential for biased algorithms to exacerbate social inequalities. Policymakers are being urged to work with tech leaders to establish ethical guidelines and safety protocols.

Future Outlook and Preventative Measures

Looking ahead, the report recommends several preventative measures to avoid worst-case scenarios. These include:

- Implementing robust testing and validation processes for AI systems.
- Developing fail-safe mechanisms to halt runaway feedback loops.
- Enhancing international cooperation on AI governance and standards.

While the immediate market impact has been negative, some analysts believe the report could serve as a wake-up call, prompting more responsible innovation and greater investment in AI safety research.