Entertainment

Katie Price Returns to UK from Dubai Despite Missile Strike Claims

Katie Price arrived at Gatwick Airport from Dubai on Saturday, denying claims that she had been trapped there by missile strikes. She sported a casual look of a crop top and joggers.

Politics

Gemma Atkinson Reveals Family Plans with Gorka Marquez After Strictly Exit

Gemma Atkinson opens up about balancing motherhood, career, and life with fiancé Gorka Marquez after his Strictly exit, sharing their parenting dynamics and future plans.

Sports

McIlroy Digs at LIV Finances but Open to Player Returns to PGA Tour

Rory McIlroy takes a dig at LIV Golf's financial woes as the Saudi fund pulls out, but says player returns would be 'good business' for the PGA Tour.

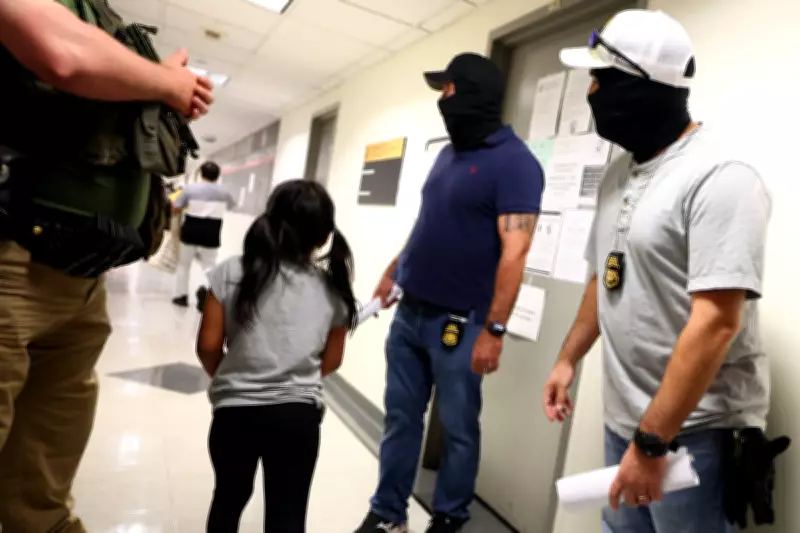

Crime

Pedestrian Killed After Being Sucked Into Frontier Airlines Engine at Denver Airport

A person was struck and killed by a Frontier Airlines jet at Denver International Airport after being partially drawn into one of its engines. The incident caused a fire and the evacuation of 231 passengers.

Weather

A Terrible Time for a Tractor Breakdown in Lincolnshire

A farmer in Brigg, Lincolnshire, suffers a tractor breakdown at the worst possible time, disrupting bird-food sowing and a school visit, but the day ends on a positive note.

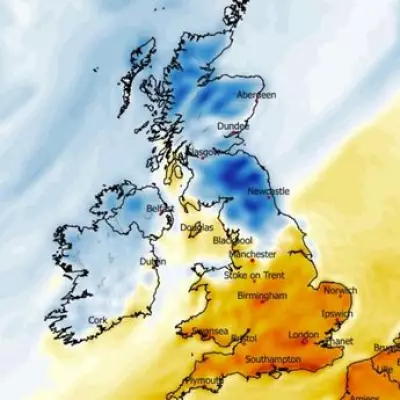

Met Office: 22C Saturday, 40 counties see above-average heat

The Met Office predicts temperatures up to 22C on Saturday, with 40 counties in England and Wales experiencing above-average heat. A north-south divide is expected.

Freak Storms and Waterspouts Batter Southern Spain

Southern Spain has been hit by flash floods and waterspouts as severe weather batters holiday hotspots, leaving residents stunned by marine tornadoes off La Manga.

Michigan Home Crushed by Tree Day After Sale

A house in St Clair Shores, Michigan, was severely damaged by a falling tree during a storm, just one day after being sold. The seller is expected to cover repairs.

Mount Dukono Eruption Kills Three, 20 Missing

Mount Dukono volcano in Indonesia erupted, spewing ash 10km high, killing three hikers and leaving 20 missing as search efforts continue.

Tech

UK Geography Weekend Special

Weekend special: 100 questions about UK geography. Perfect for students preparing for exams or anyone who loves learning about Britain's diverse landscapes.